The Scaling Illusion and the Rise of a Tiny Competitor

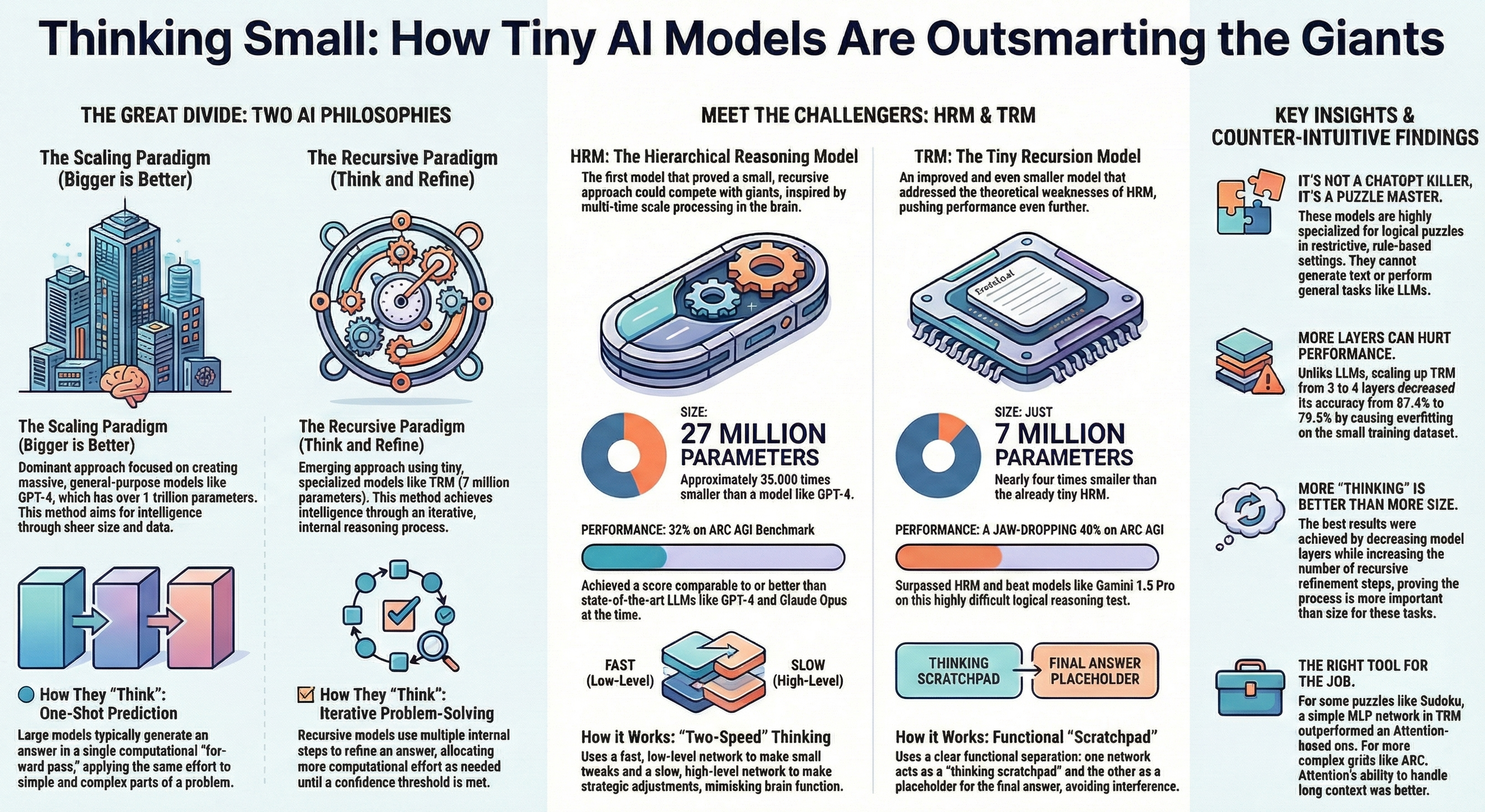

The AI world is currently gripped by a prevailing belief: "The bigger the model, the better." Large companies are spending hundreds of billions of dollars on scaling models, hoping to achieve Artificial General Intelligence (AGI). But what if I told you that a small model with only 27 million parameters has beaten trillion-parameter models like GPT-4 in logical reasoning tests?

This article tells the story of the emergence of Small Recursive Models that have changed the rules of the game.

While Large Language Models (LLMs) struggle with logical puzzles that we call AGI benchmarks, a new and fascinating idea has emerged against this "scaling virus." The idea is simple: What if instead of making the model's brain bigger, we allow it to think before answering and refine its response?

This idea led to the creation of a model called HRM (Hierarchical Reasoning Model). This model with only 27 million parameters (compared to GPT-4's 1 trillion parameters, which is about 35,000 times larger) achieved an impressive score of 32% on the ARC-AGI benchmark, challenging advanced models from OpenAI, Anthropic, and Google.

How Does the HRM Model Work? (The Secret of Slow and Fast Thinking)

The key difference is that regular language models try to predict everything in a single "Forward Pass." This means the effort they put into calculating "1+1" equals the effort for a complex problem.

But the HRM model is inspired by the structure of the human brain. This model consists of two small transformer networks that operate at different speeds:

- Low-level network (Fast): Makes quick, incremental changes on a mental "Scratchpad."

- High-level network (Slow): Determines the overall strategy and decides whether the answer is ready or needs more thinking.

Instead of instant responses, this model thinks recursively and refines its answer multiple times until it reaches the correct result.

The TRM Model: When "Small" Gets Even Smaller!

The story doesn't end here. An even more advanced version called TRM (Tiny Recursive Model) was introduced by a researcher named Alexia, which surpassed even HRM. By removing unnecessary biological assumptions and improving the training method, this model achieved higher efficiency with a size 4 times smaller than HRM (only 7 million parameters).

The TRM model achieved a score of 40% on the ARC-AGI benchmark, beating powerful models like Gemini 1.5 Pro and standing shoulder-to-shoulder with GPT-5.

Why Was TRM More Successful?

Instead of mimicking the mouse brain (which was used in HRM), the TRM model focused on a functional separation:

- A space for the Thinking Scratchpad.

- A space for the Answer Placeholder.

Instead of assuming it reaches an "equilibrium" (a mistake HRM made), this model trains exactly on the loops it executes. The most interesting point is that when researchers tried to increase the model's layers, its performance decreased. In fact, the model's small size prevented it from "Overfitting" on limited data and helped it generalize better.

An Analogy for Better Understanding

Imagine asking two people to draw a complex painting.

The first person (GPT-4): Is a genius who must complete the painting with one stroke of the pen without lifting their hand from the paper. No matter how genius they are, the probability of error is high.

The second person (TRM): Is an ordinary painter, but they're allowed to draw an initial sketch, look at it, erase, correct, and work on details for hours until they're satisfied with the result.

In logical and complex problems, the second painter (small recursive model) often wins because they have the opportunity to think and correct, even if they have a smaller brain (parameters).

Conclusion: The Future of AI is Recursive

This research proved that solving hard logical problems is not exclusive to giant language models. Small models with recursive thinking capability (Recursion) can solve problems that large models cannot handle with a single processing pass.

Instead of memorizing data, these models learn how to edit a Canvas over multiple steps until they reach the correct answer.

Key Takeaways from This Research:

- Bigger isn't always better - architecture and thinking method matter more than size

- The ability to review and refine can replace billions of parameters

- Inspiration from the human brain (fast and slow thinking) can lead to more efficient models

- Smaller models can generalize better and are less prone to overfitting

Perhaps the future of AI lies not in trillion-parameter models, but in intelligent models that know how to "think."